I got into a conversation the other day about why I, as a massive supporter of the right to online privacy, still tended to use my real name online, in places where a more anonymous handle would be more than acceptable.

You’d have thought that, as somebody quite proficient at OSINT (Open Source Intelligence: the art of finding information, particularly about people, from public sources), I’d have taken every opportunity to grab a little anonymity, especially as my real name is almost certainly unique in the world.

It comes down to risk vs reward. Understanding and mitigating the risk is crucial.

If you know my real name (which is pretty obvious from the domain name of this blog), then there is already loads of freely available information out there about me. I bought domain names in the 90s, back when a real postal address was mandatory (they even sent you a physical certificate of ownership), and I used to run a business out of my house, so it was a legal requirement to have my business address on any formal paperwork. Finding my home address is therefore trivial. I couldn’t find anywhere leaking my date of birth online, but I’d bet there is some site I’ve entered it into (back before I thought to lie about it) which now leaks it publicly. Similarly, I get so many requests for genealogy info that I’m sure somewhere discloses my mother’s maiden name. There are also documents with my name on them that I wrote at university, on what is now called cyber security, which I now wish didn’t exist and which highlight that my security “white hat” has been bleached over the years.

That information is all out there. The genie is out of the bottle, and it’s never going back in. So, you’d think that was even MORE reason to hide my real name online? Not really, and it’s all down to understanding and managing that risk.

If I operate under a pseudonym, I take on a new risk: the risk of some detail linking the anonymous me to the real me. I’m going to be in the same physical location as my anonymous self, probably using the same computer, browser and internet connection. I’m going to have similar views, knowledge, understanding, frailties and experiences, the same grammar mistakes, the same typing patterns and the same mouse movement patterns.

As mass tracking and analysis of both data and metadata become easier and more prevalent, the chances of my accidentally revealing a link between my real self and my anonymous self increase, and once somebody makes that link, there is no point being anonymous at all.

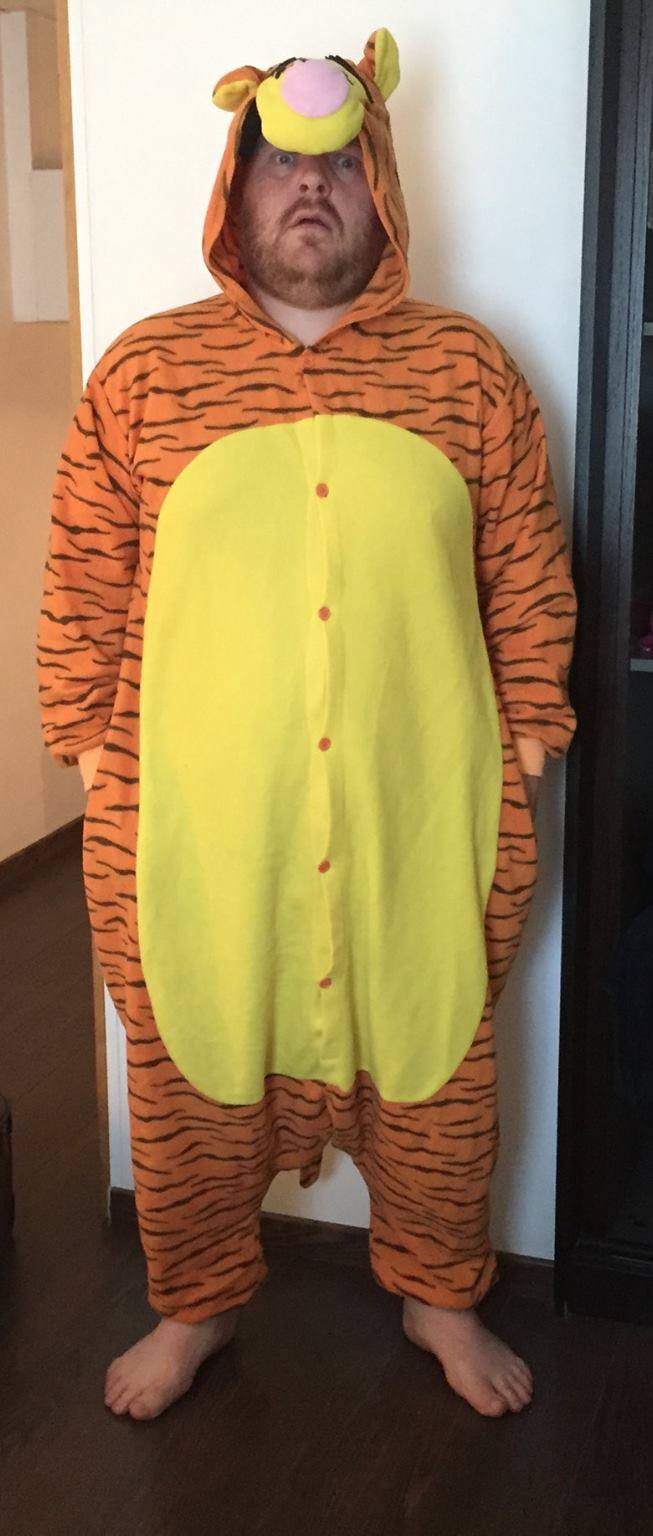

What’s more, the ability to operate under a pseudonym means I’m more likely to reveal additional information than I normally would under my real identity (even if only subconsciously), increasing the risk even further. The instant all the content you wished to keep anonymous is linked back to your real identity you’re essentially stood there with a big sign saying “this is the stuff I didn’t want you to know was by me”.

To further evaluate the risk, you also have to understand that data can last forever, and who can access it changes over time. It’s not about who can see your private content now; it’s about who can see it in the future and then associate it back to you.

Back in 2006, I was in Amsterdam mainly to watch Feyenoord vs Blackburn Rovers, but I also visited the Amsterdam Museum (despite the cliché, not all English football fans in Amsterdam just hang out in De Wallen drinking beer and smoking weed) and read a fascinating but terrifying account of the Nazi occupation of Holland in World War 2. The Dutch, quite sensibly, had recorded everyone’s religion as part of the census, to ensure that if the state organised someone’s funeral, an appropriate ceremony was performed. After the Nazi occupation, however, this same list had a whole different purpose.

The details you put online are no different. You may trust a website to keep your private data private, but what if it is sold, hacked or pressured by a nation state, or has a rogue or sloppy employee?

I therefore operate under the assumption that EVERYTHING I put online can potentially end up in the wrong hands one day.

That doesn’t mean I instantly make everything public just because people might see it one day anyway, but it’s always a thought in the back of my mind when I post.

So, I’ve given up on online privacy? Hell no! It’s important to realise that anonymity and privacy are not the same thing, and that the right to privacy is an important right to have, even if I choose to waive it.

Just because I feel that one day a hack, leak or change of government could put my emails/PMs/Skype calls etc. in the wrong hands doesn’t mean I want to share them with everyone right now. It’s precisely because anonymity is mere obfuscation, which gives people a false sense of security, that I think privacy is so very, very important.

For example, my Twitter account is public. This is my choice, and I know anything I post on there can be seen by the entire world in perpetuity, so it tends to be limited mostly to conversations about tech, football or politics. Facebook, however, I have configured to be more private. That doesn’t mean I’ll post anything incriminating or particularly personal there, but it will give you more of an insight into my daily comings and goings, my social life and particularly the current and upcoming events I’m attending. This includes data that may be of some value ahead of time (e.g. to allow you to break into my house, or to scam my friends/bank etc. into believing I’m stranded abroad without money) but virtually zero value after the fact. Therefore, as long as I can trust Facebook to keep that data private for a short period, the risk is much smaller.

But privacy in the modern world is tricky. It’s 26 years since the launch of PGP and almost 3 years since Google announced End-to-End, but there is still no practical way for me to send an email to any non-technical friends in the belief that nobody other than them will ever be able to read it. End-to-end (E2E) encrypted messengers like Signal, Telegram, WhatsApp and even Facebook Messenger are great, but do I really trust my phone and computer operating systems enough to be sure the message isn’t snooped on once it’s decrypted? And even if I did, is it reasonable to expect my mum to install a new messenger app, when I’m unlikely ever to say anything to her that I couldn’t be overheard saying in the street?

And what of systems that don’t purport to offer E2E encryption? I love Slack, but even if its data is encrypted both in transit and at rest, as it claims, that data can still be decrypted by Slack and subpoenaed. The tech simply isn’t there yet to make privacy EASY, and that’s the way both corporations (who sell your data) and government agencies (who use it for surveillance purposes) like it.

Which brings us back to risk vs reward. In much the same way that the only way to truly secure a computer is to unplug it and encase it in concrete, the only way to stay truly private online is never to be online. However, if you want the rewards being online brings, you have to accept the risks. But once you understand the risks, you can start to mitigate them somewhat.

There is always a risk. An E2E encrypted chat could still be made public, but that is certainly less of a risk than posting on some public forum with an unknown operator who may be doing anything with your data to fund their project, even if you are operating under some veil of anonymity. There is a chance the government’s mass surveillance data could be compromised, but it’s much more likely that the dodgy service providing you with free PPV films and sports will have its subscribers’ details made public. There is a chance your Slack logs may be subpoenaed, but there is a greater chance you’ll leave your PC or phone unattended and logged into Slack.

Risk vs reward, but make sure you understand ALL the risks, not just the immediate ones.

My advice: choose your tools and sites wisely, choose wisely what you say online and who you say it to, and work with organisations like the Open Rights Group and the EFF to ensure your right to privacy is a legally protected right.